How YouTube’s recommendation algorithm works

YouTube’s recommendation algorithm is based on machine learning and big data analytics. It uses complex neural networks to process a huge amount of information about user behavior. The main goal of the algorithm is to maximize the time a user spends on the platform, which is directly related to advertising revenue. The algorithm takes into account many factors, including viewing history, preferences, interactions with content, and behavioral patterns.

The key components of the algorithm are:

- Viewing history: which videos the user has watched, for how long, and how often.

- Interactions: likes, dislikes, comments, channel subscriptions.

- Context: time of day, device, frequency of visits.

- Social signals: the popularity of the video among other users.

The algorithm is constantly learning from new data, which allows it to adapt to changing user preferences.
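As a rough illustration of how the four components above could be combined, here is a minimal sketch that blends them into a single score with fixed weights. The `Signals` class, the `WEIGHTS` values, and the 0-to-1 scaling are all assumptions made for this example. YouTube's actual system is a learned neural network, not a hand-tuned formula.

```python
from dataclasses import dataclass

# Hypothetical weights chosen for illustration only; a real system
# would learn these relationships from data.
WEIGHTS = {"history": 0.4, "interactions": 0.3, "context": 0.1, "social": 0.2}


@dataclass
class Signals:
    """Per-video signals, each assumed to be normalized to the range 0..1."""
    history: float       # similarity to the user's viewing history
    interactions: float  # likes, comments, subscriptions
    context: float       # fit with time of day, device, visit frequency
    social: float        # popularity among other users


def score(signals):
    """Weighted sum of the four signal groups."""
    return (
        WEIGHTS["history"] * signals.history
        + WEIGHTS["interactions"] * signals.interactions
        + WEIGHTS["context"] * signals.context
        + WEIGHTS["social"] * signals.social
    )


def rank(candidates):
    """Return video ids ordered from highest to lowest score."""
    return sorted(candidates, key=lambda vid: score(candidates[vid]), reverse=True)


candidates = {
    "cooking_video": Signals(history=0.9, interactions=0.5, context=0.5, social=0.2),
    "viral_video": Signals(history=0.1, interactions=0.2, context=0.5, social=0.9),
}
print(rank(candidates))  # ['cooking_video', 'viral_video']
```

With these made-up weights, a video that matches the user's history outranks one that is merely popular. Shifting weight toward `social` would reverse the order.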

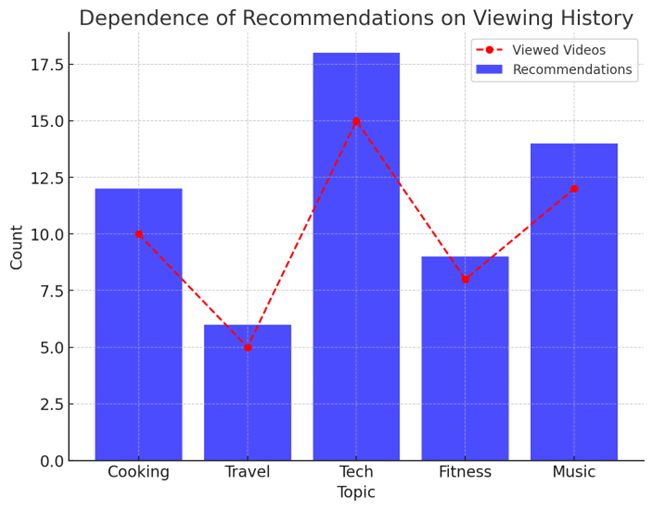

How viewing history influences recommendations

Viewing history is one of the key factors influencing recommendations. The algorithm analyzes which videos the user has watched, for how long, and how often. For example, if a user regularly watches cooking videos, the algorithm will suggest more content on this topic. The algorithm also considers the viewing context: if a user watches travel videos only on weekends, recommendations can reflect that pattern.

In addition, the algorithm pays attention to whether a video is watched to completion. Videos that the user watches to the end are considered more relevant than those they abandon partway through. This helps the algorithm better understand what content the user is really interested in.
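One way to picture the completion signal is as an average completion ratio per topic. The sketch below assumes each watch event is a `(topic, seconds_watched, video_length_seconds)` tuple. That format and the function name `topic_affinity` are hypothetical, not part of any YouTube API.

```python
from collections import defaultdict


def topic_affinity(watch_events):
    """Average fraction of each video watched, grouped by topic.

    watch_events: iterable of (topic, seconds_watched, video_length_seconds).
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for topic, watched, length in watch_events:
        if length <= 0:
            continue  # skip malformed entries
        # Cap at 1.0 so rewatching a segment does not inflate the ratio.
        totals[topic] += min(watched / length, 1.0)
        counts[topic] += 1
    return {topic: totals[topic] / counts[topic] for topic in totals}


events = [
    ("cooking", 600, 600),  # watched to the end
    ("cooking", 300, 600),  # watched halfway
    ("travel", 60, 600),    # abandoned early
]
affinity = topic_affinity(events)
print(affinity["cooking"])  # 0.75
```

Under this toy measure, cooking (0.75) would count as a much stronger interest than travel (0.1).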

Fig. 1. Dependence of recommendations on viewing history

The role of likes and dislikes in forming recommendations

Likes and dislikes are clear signals that a user sends to the algorithm. Likes increase the likelihood of similar content appearing in recommendations, while dislikes decrease it. However, research shows that users rarely use the dislike feature, which limits its influence on the algorithm.

The algorithm also takes into account the context of interactions. For example, if a user likes a video after watching it for a long time, this is considered a more significant signal than a like after watching it for a short time. In addition, the algorithm analyzes interaction patterns: if a user regularly likes videos from a certain channel, this may lead to an increase in recommendations from this channel.
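The idea that a like counts for more after a long watch can be sketched as a simple weighting function. The function name, the 0.5 baseline, and the fixed value for dislikes are assumptions chosen for illustration. The source does not say how the real system weights these signals.

```python
def interaction_signal(liked, disliked, watch_fraction):
    """Return a signed signal: positive for likes, negative for dislikes.

    watch_fraction is the share of the video watched (0..1).
    A like is scaled from 0.5 (barely watched) up to 1.0 (fully watched).
    """
    fraction = max(0.0, min(watch_fraction, 1.0))
    if liked:
        return 0.5 + 0.5 * fraction
    if disliked:
        return -1.0  # assumed constant; dislikes are treated as explicit rejection
    return 0.0


# A like after watching 90% of a video outweighs a like after 10%.
print(interaction_signal(True, False, 0.9) > interaction_signal(True, False, 0.1))  # True
```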

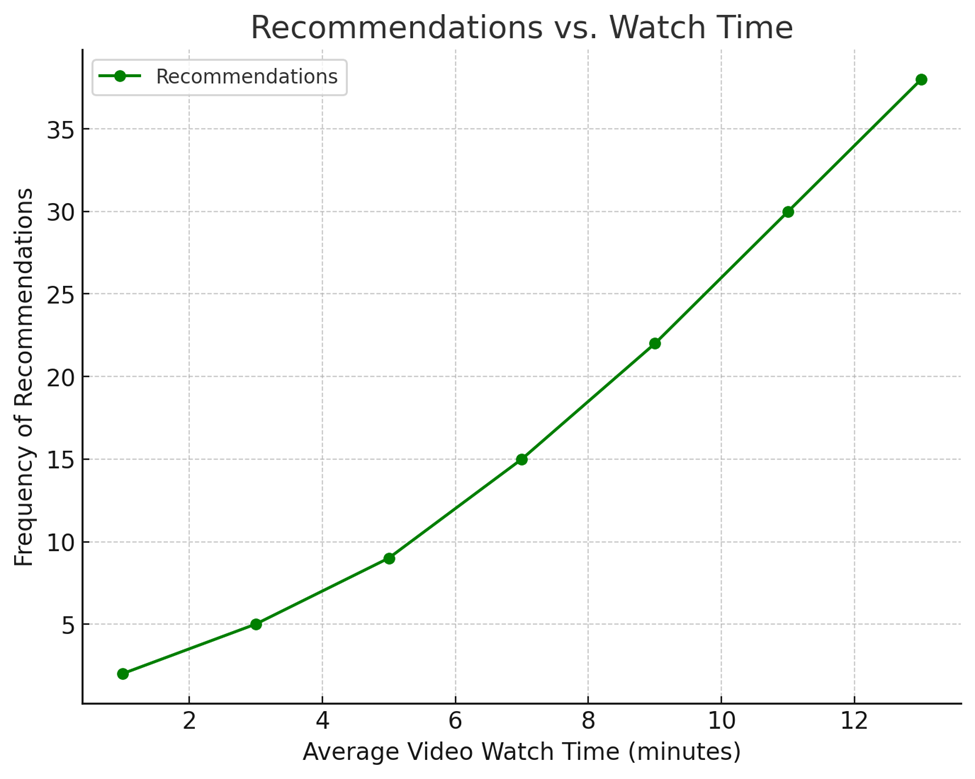

Time spent on the platform as a key metric

YouTube actively uses the "watch time" metric to evaluate the success of recommendations. Videos that hold a user's attention longer are more likely to be recommended to others. This can lead to the promotion of content that evokes strong emotions, even if it does not match the user's interests. For example, videos with provocative titles or content that sparks controversy often hold attention longer, which increases their chances of being recommended.

The algorithm also takes into account viewing sessions – periods of time when a user is actively engaging with the platform. Videos that encourage long sessions are prioritized in recommendations.
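To make the session idea concrete, the following sketch computes, for each video, the average total watch time of the sessions it appeared in. The data layout (a list of sessions, each a list of `(video_id, seconds_watched)` pairs) and the function name are assumptions for this example only.

```python
from collections import defaultdict


def mean_session_time(sessions):
    """Average total session length (seconds) for sessions containing each video."""
    total = defaultdict(float)
    count = defaultdict(int)
    for session in sessions:
        session_seconds = sum(seconds for _, seconds in session)
        # Count each video once per session, even if it was played twice.
        for video_id in {vid for vid, _ in session}:
            total[video_id] += session_seconds
            count[video_id] += 1
    return {vid: total[vid] / count[vid] for vid in total}


sessions = [
    [("a", 100), ("b", 200)],  # 300-second session
    [("a", 50)],               # 50-second session
]
print(mean_session_time(sessions))
```

Here video `b` appears only in the long session (300 seconds on average), while `a` averages 175 seconds, so a session-oriented ranker would favor `b`.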

Fig. 2. Recommendations vs. Watch Time

Influence of comments and social interactions

Comments and other forms of social interaction (such as channel subscriptions) are also taken into account by the algorithm. Videos that generate active discussions are more likely to be recommended, as this indicates high engagement. In addition, the algorithm analyzes the sentiment of comments: positive comments can increase recommendations, while negative ones can decrease them.

Channel subscriptions also play an important role. If a user is subscribed to a certain channel, videos from this channel will appear in recommendations more often. This creates a feedback loop, where popular channels get even more views.

How search queries influence recommendations

User search queries also play an important role in forming recommendations. The algorithm analyzes what queries the user types and uses this information to refine their interests. For example, if a user often searches for videos about healthy eating, this can influence recommendations, even if they rarely watch such videos.

In addition, the algorithm takes into account search results. If a user often clicks on certain videos in search results, this signals their interest in this topic.
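A very simplified way to turn search queries into an interest profile is to count how often each term appears. The sketch below is only an illustration: the stopword list and the function name `query_interest` are hypothetical, and a production system would rely on far richer language models.

```python
from collections import Counter

# Small, hypothetical stopword list for the example.
STOPWORDS = frozenset({"the", "a", "for", "to", "how", "of", "and"})


def query_interest(queries):
    """Return each term's share of all non-stopword terms across the queries."""
    counts = Counter()
    for query in queries:
        counts.update(word for word in query.lower().split() if word not in STOPWORDS)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in counts.items()}


profile = query_interest(["healthy eating recipes", "healthy breakfast"])
print(max(profile, key=profile.get))  # healthy
```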

How advertising influences recommendations

YouTube ads influence recommendations, although this often goes unnoticed by users. The algorithm takes into account how a user interacts with an ad: whether they click on it, skip it, or watch it until the end. For example, if a user frequently clicks on fitness-related ads, the algorithm may start recommending videos about working out or healthy eating.

Additionally, ads can influence recommendations indirectly. If a user watches an ad for a particular brand, the algorithm may associate that brand with the user’s interests and suggest content related to that category. However, this influence of ads raises questions about the transparency of algorithms, as users are often unaware that their interactions with ads shape their recommendations.

The "filter bubble" effect and its consequences

The filter bubble effect occurs when an algorithm only offers a user content that matches their existing preferences, limiting their access to diverse information. For example, if a user frequently watches videos on a political topic with a certain slant, the algorithm will offer them more content that confirms their point of view, while ignoring alternative opinions.

This effect increases the polarization of opinions and facilitates the spread of misinformation, since provocative content often holds attention longer. To combat this, YouTube could implement mechanisms that artificially expand the diversity of recommendations and give users more control over their preferences.
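One possible mechanism for expanding diversity is a greedy re-ranking step that penalizes topics already present in the list. This is a sketch of a general technique, not something YouTube is known to use. The `penalty` value and the `(video_id, topic, relevance)` tuple format are assumptions.

```python
def diversify(candidates, k, penalty=0.3):
    """Greedily pick k videos, lowering the score of already-covered topics.

    candidates: list of (video_id, topic, relevance) tuples.
    """
    remaining = list(candidates)
    picked = []
    topic_counts = {}
    while remaining and len(picked) < k:
        # Adjusted score = relevance minus a penalty per prior pick of the same topic.
        best = max(
            remaining,
            key=lambda c: c[2] - penalty * topic_counts.get(c[1], 0),
        )
        picked.append(best[0])
        topic_counts[best[1]] = topic_counts.get(best[1], 0) + 1
        remaining.remove(best)
    return picked


candidates = [
    ("p1", "politics", 0.9),
    ("p2", "politics", 0.8),
    ("c1", "cooking", 0.6),
    ("p3", "politics", 0.7),
]
print(diversify(candidates, k=3))  # ['p1', 'c1', 'p2']
```

Ranked purely by relevance, the top three would all be political videos. With the penalty applied, the cooking video moves into second place.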

Ethical and social implications

YouTube’s personalized recommendations, while intended to improve the user experience, have serious ethical and social implications. One of the main concerns is the spread of misinformation and extremist content. An algorithm optimized to retain attention often promotes content that evokes strong emotions, even if it is false or harmful. This can contribute to a distorted view of the world among users, especially those who lack critical thinking or media literacy.

Another concern is the increase in social polarization. Algorithms that create “filter bubbles” limit users’ access to diverse viewpoints, which can lead to the strengthening of extreme views and a decrease in tolerance for other opinions. For example, users prone to conspiracy theories may increasingly receive content that confirms their beliefs, making it difficult to have constructive dialogue in society.

To address these issues, more transparent and ethical algorithms are needed that take into account not only engagement but also the quality of content. YouTube could collaborate with independent experts and researchers to develop mechanisms to reduce the spread of misinformation. It is also important to improve the digital literacy of users so that they can critically evaluate recommended content and manage their own information space.

Conclusion

User behavior plays a key role in YouTube’s recommendations, determining what content appears on the homepage and in the recommended sections. The platform’s machine learning-powered algorithms analyze a variety of factors, including viewing history, likes, dislikes, comments, time spent on the platform, and even search queries. However, this personalization also has a downside: it contributes to the creation of a “filter bubble,” where the user is trapped in an isolated information environment that limits their access to diverse viewpoints. This can increase polarization, spread misinformation, and reduce the quality of content as creators are forced to adapt to the demands of an algorithm that maximizes attention.

To address these issues, it is necessary to implement mechanisms that take into account not only engagement but also content diversity. YouTube could give users more control over recommendations, for example by adding the ability to see content from opposing viewpoints or by explaining why a particular video was recommended. It is also important to consider the ethical aspects of how algorithms work, collaborating with experts to reduce the negative impact on society. Users, in turn, must be more aware of how their behavior affects recommendations and actively manage their experience using available tools. Only a combination of technical improvements, ethical principles, and conscious user behavior will make the platform not only fun but also useful for society.
